I’ve been using an Amazon Kindle for a few days, and had occasion a couple of months ago to use a newer Kindle 2 for a few days as well. The device is wonderful and terrible all at once. I enjoy using it immensely, except for how painful it is. It is the first electronic device I have felt truly conflicted about.

The Kindle is an electronic reading device the size of a small hardback and half the thickness. It is a mess of plastic edges and buttons, with a little keyboard across the bottom made of chiclet-sized keys, big silly page-turning buttons on the sides, and no way to really hold it comfortably. In the middle is a moderately sized “e-ink” display that provides a high-contrast reading surface similar to ink on paper. The newer Kindle 2 is a bit thinner, much more ergonomic, and uses a better interface for navigating through content, but is otherwise quite similar.

Because the device does not use a conventional display, its battery can last for a few weeks. Because it has built-in Sprint wireless, it can sync and download books automatically. That’s a neat trick.

All Kindle content purchased through Amazon (and it can only be purchased through Amazon) is protected by extremely onerous copy-prevention measures. One does not “buy” a book on the Kindle, but rather buys a “license.” Books on the Kindle cannot be shared, loaned, resold, or returned. And Amazon can “revoke” a license for any reason, wiping the book from your Kindle without prior notification or consent. The thought of buying anything to put on the Kindle sickens me, because it feels like something straight out of 1984.

At the same time, the allure of instant “buy it now” satisfaction and the fact that the DRM restrictions have already been broken do provide some comfort. Not a lot, but I could see myself in a moment of weakness, or just before a long trip, breaking down and clicking the buy button. And then promptly removing the DRM, of course.

I’ve loaded my Kindle with a dozen free books. Most of them are old and out of copyright, provided courtesy of Project Gutenberg, a service that digitizes old books. One book my mom purchased, and one was a promotional offer. The purchased books are generally formatted a bit better, but on the whole the experience is pretty disappointing. Everything is displayed in one font. The kerning is fine, but I wish I could adjust the line spacing. Instead of pages, Amazon uses some sort of strange sectioning system, so that if the font is scaled larger or smaller, your place stays the same. The device has no backlight, so you need a book light (how old-fashioned!) to read it in the dark. And as I’ve already said, the device is very difficult to hold comfortably, even when mounted in its provided leather cover, although the Kindle 2 is a lot better in this regard.

I’ve been throwing the Kindle in my bag and taking it everywhere I go. I keep finding myself reading. At work, during lunchtime. On the T. Around the house. When I’m waiting for something or someone. It is nice to always have some books present, and to be able to effortlessly and instantly switch between them. It is nice that my “book” is of a standard size, no matter the length of the text.

I bought an iPhone application a while ago called “Classics” that contains nicely formatted texts of several classic books that are in the public domain. Despite the iPhone’s small screen, the reading experience is not unduly painful, and I’ve used my phone to read Gulliver’s Travels and The Jungle Book. On the Kindle I’m currently reading The Island of Doctor Moreau. There is a lot of good, free stuff out there. I could keep doing this for a while. And for classics that Amazon has formatted and added to their Kindle store (purchasable for $0.00), the place that I stop reading on the Kindle will actually synchronize automatically with the Kindle application for the iPhone. Sadly, that doesn’t work for books I load onto the Kindle through means other than Amazon.

The end result is that I’m really enjoying my little electronic reading device, despite all its flaws. I wouldn’t pay $300 for it, but I didn’t have to, because my mom never used it and was persuaded to give it to me. But what I have decided to do, in my typical silliness, is eBay it and apply the profits to a Kindle 2. Sorry, Mom. The Kindle 2 doesn’t solve all of my complaints, especially the most important one, about the DRM, but it does make the experience somewhat more pleasant, and I think the $100 or so upgrade price is worth it.

I can’t recommend that anyone go out and buy a Kindle. I can’t get behind what Amazon is doing with their Kindle store and their draconian restrictions, although I can hope that things will improve with time. What I can say is that I think technological progress, the marketplace, and consumer opinion have finally converged to the point where this sort of device is feasible, practical, and in some cases desirable. So we’ve made some progress.

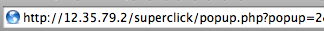

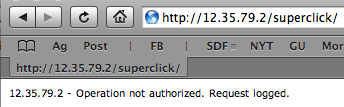

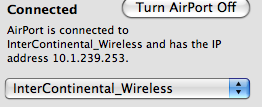

I first noticed that between each page view I would get a little white flash. Then when I went to the New York Times, I discovered that I couldn’t click through to the second page of articles — I would just get redirected back to the first page.

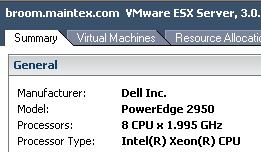

Too bad the inaugural virtual machine is going to be running SCO. Oh well. It’s great that these days you can buy one moderately priced, very powerful machine and use it to run all the services you need for a medium-sized business in a secure and stable way, and then later expand to add capabilities like high availability. This isn’t news to people up to speed on virtualization technologies, but I’m still easing into the awesomeness.

I’m getting a bit low on hard drive space and had a very strong desire to replace my large general-purpose Linux server with a smaller dedicated appliance (it was an irrational desire, but these things happen and you just have to go with them). After much agonizing I picked up an